Hi everyone!

I’m working on a localization system for a vehicle in BeamNG.tech by using the ROS2-BeamNG Integration. I’ve noticed that the GPS outputs latitude and longitude values, but since the BeamNG world is not georeferenced to real-world coordinates, these values are relative.

My main question is:

How can I properly convert GPS readings (lat, lon) into BeamNG world coordinates (x, y)?

I read in the documentation that refLat and refLon can be set when creating the GPS sensor, and they define the origin of the GPS coordinate system. That makes sense… but I still have some doubts about how to get a full transformation, for example:

- My vehicle starts at position pos = [690.680, 418.680, 0.300] in the BeamNG world,

- and at that location, the GPS reports latitude = 0.0037657, longitude = 0.0062047,

- how should I use this reference point to convert between GPS and world coordinates?

Is there a standard or recommended approach (e.g., affine transform, scaling factor) to handle this mapping?

My goal is to create a real-time 2D representation of the vehicle's movements.

Thanks in advance

Cheers!

Right now I'm doing this:

    from math import cos, radians

    from sensor_msgs.msg import NavSatFix

    def gps_listener_callback(self, msg: NavSatFix):
        """
        Converts GPS coordinates (lat, lon) into approximate local coordinates (x, y),
        and shifts them so the trajectory starts from (0, 0).
        """
        lat = msg.latitude
        lon = msg.longitude

        # Approximate lat/lon → meters conversion (flat-earth)
        meters_per_deg_lat = 111320
        meters_per_deg_lon = 111320 * cos(radians(0))  # reference latitude taken as 0°

        x = lon * meters_per_deg_lon
        y = lat * meters_per_deg_lat

        # Initialize the origin with the first GPS fix
        if self.gps_origin is None:
            self.gps_origin = (x, y)

        # Axes swapped and negated to match the representation used by the other structures
        x_rel = -(y - self.gps_origin[1])
        y_rel = -(x - self.gps_origin[0])

        self.beamng_traj.append((x_rel, y_rel))

But I don't know whether my approximation is right, let me know!

Hi,

thanks for your question.

Depending on what you’re trying to do, a simple approach might work just fine. Mapping between GPS coordinates and the BeamNG world can get tricky, but for many use cases, it doesn’t need to be overly complicated.

A good middle-ground would be to georeference a few known points on the map (like corners or landmarks), and then use basic linear interpolation between them. This usually works well for moderate-sized maps and areas that don’t cover too much latitude.
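As a rough sketch of that idea, assuming two control points whose GPS and world coordinates are both known, and assuming world x follows longitude and world y follows latitude (an axis convention you would need to verify on your map), each axis can be interpolated independently. The `ControlPoint` type and the `gps_to_world` function are hypothetical names, and the second control point is made up for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlPoint:
    """A landmark with known GPS and BeamNG world coordinates (hypothetical type)."""
    lat: float
    lon: float
    x: float
    y: float


def gps_to_world(lat: float, lon: float, a: ControlPoint, b: ControlPoint) -> tuple[float, float]:
    """Interpolate (or extrapolate) linearly between two control points, per axis.

    Assumes world x varies only with longitude and world y only with latitude,
    i.e. the map is aligned with east/north and small enough to treat as flat.
    """
    if a.lon == b.lon or a.lat == b.lat:
        raise ValueError("control points must differ in both latitude and longitude")
    x = a.x + (lon - a.lon) * (b.x - a.x) / (b.lon - a.lon)
    y = a.y + (lat - a.lat) * (b.y - a.y) / (b.lat - a.lat)
    return x, y


# First point: the start pose quoted earlier in the thread.
p0 = ControlPoint(lat=0.0037657, lon=0.0062047, x=690.680, y=418.680)
# Second point: assumed for illustration only, not a confirmed property of the sensor.
p1 = ControlPoint(lat=0.0, lon=0.0, x=0.0, y=0.0)

# Query halfway between the two control points.
x_mid, y_mid = gps_to_world(0.00188285, 0.00310235, p0, p1)
print(f"x = {x_mid:.2f} m, y = {y_mid:.2f} m")
```

With more than two control points, or if the map is rotated relative to north, a least-squares affine fit would be the natural extension of the same approach.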

Since you’re already using the GPS sensor in BeamNG, the math is being done internally to convert world coordinates into GPS. What you need now is the reverse. The nice part is: the sensor also gives you local x and y values (in meters from the reference point you set with refLat and refLon). You can simply use those as approximations for the world x/y positions relative to your set origin.
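If only latitude and longitude are available (as in a `NavSatFix` message), the reverse conversion can be approximated with a flat-earth formula around the reference point. This is a minimal sketch, not the sensor's actual internal math: the constant 111320 m/deg is the spherical approximation used earlier in the thread, the reference defaults of 0 are an assumption, and `gps_to_local` is a hypothetical helper name:

```python
from math import cos, radians

# Spherical-earth approximation; the constant BeamNG uses internally may differ (assumption).
METERS_PER_DEG = 111_320.0


def gps_to_local(lat, lon, ref_lat=0.0, ref_lon=0.0):
    """Return (east, north) offsets in meters from (ref_lat, ref_lon)."""
    north = (lat - ref_lat) * METERS_PER_DEG
    # Longitude degrees shrink with the cosine of the latitude.
    east = (lon - ref_lon) * METERS_PER_DEG * cos(radians(ref_lat))
    return east, north


# Sample from the first post: GPS reading taken at world pos (690.680, 418.680).
east, north = gps_to_local(0.0037657, 0.0062047)
print(f"east = {east:.2f} m, north = {north:.2f} m")
```

With the sample values from the first post this gives roughly (690.71, 419.20), within about half a meter of the reported world position (690.680, 418.680). One sample is only suggestive, but it is consistent with world x following longitude and world y following latitude, with the reference at the world origin. The small residual hints that the sensor's scale factor is not exactly 111320.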

Unless you’re doing something that requires real-world accuracy across large distances, this should be more than enough—and it avoids dealing with projections like Mercator, which can add unnecessary errors.

Hope this helps.

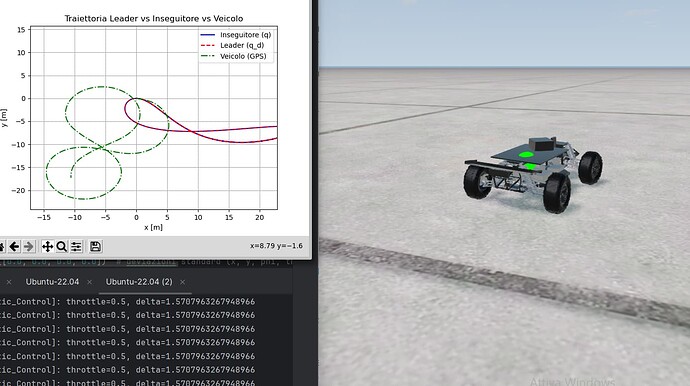

To give some context, I’m working on comparing a bicycle model (i.e., a two-axle vehicle model) with a simulated vehicle in BeamNG integrated with ROS2. The objective is to validate a trajectory tracking controller, where:

- The controller computes steering and throttle commands based on the error between the current state and a desired trajectory (in this case, a Bernoulli lemniscate).

- These commands are sent to the simulated vehicle as follows:

    self.send_control_signal(VehicleControl(
        throttle=throttle_cmd,
        steering=delta_cmd,
        brake=0.0,
        parkingbrake=0.0,
        clutch=0.0,
        gear=2
    ))

To evaluate the controller’s performance, I compare three trajectories:

- The real trajectory (from BeamNG’s GPS sensor),

- The desired trajectory (the Bernoulli lemniscate),

- And the predicted trajectory (from the bicycle model).
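One possible way to quantify such a comparison, once all trajectories are expressed in the same (x, y) frame, is to sample the lemniscate and measure how far each measured point lies from the nearest sample. This is only an illustrative sketch, not the evaluation actually used here: the half-width `c`, the sample counts, and the synthetic "measured" path are assumptions, and all function names are hypothetical:

```python
from math import cos, sin, pi, hypot, sqrt


def lemniscate(c: float, n: int) -> list[tuple[float, float]]:
    """Sample n points on a Bernoulli lemniscate with half-width c (meters).

    The points satisfy (x^2 + y^2)^2 = c^2 * (x^2 - y^2).
    """
    pts = []
    for k in range(n):
        t = 2.0 * pi * k / n
        d = 1.0 + sin(t) ** 2
        pts.append((c * cos(t) / d, c * sin(t) * cos(t) / d))
    return pts


def nearest_sample_errors(actual, reference):
    """Distance from each actual point to the closest reference sample."""
    return [min(hypot(ax - rx, ay - ry) for rx, ry in reference) for ax, ay in actual]


def rms(values):
    """Root-mean-square of a non-empty sequence of numbers."""
    return sqrt(sum(v * v for v in values) / len(values))


desired = lemniscate(c=20.0, n=400)
# Stand-in for a measured trajectory: every 10th desired point, shifted 0.5 m in y.
measured = [(x, y + 0.5) for x, y in desired[::10]]
errors = nearest_sample_errors(measured, desired)
print(f"RMS error: {rms(errors):.3f} m, max error: {max(errors):.3f} m")
```

The nearest-sample distance ignores timing, so it measures path-following error rather than tracking error at a given instant. Comparing points with matching timestamps would capture the latter.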

My main question is about how to interpret the GPS data provided by BeamNG.

For example, here’s a typical GPS message I receive:

    header:
      stamp:
        sec: 1748083366
        nanosec: 573049448
      frame_id: ego
    status:
      status: 1
      service: 0
    latitude: 0.003726335379933379
    longitude: 0.006060487313339069
    altitude: 0.0
    position_covariance:
    - 0.0
    ...
    position_covariance_type: 0

I understand that BeamNG’s GPS sensor internally converts world coordinates into GPS, but what I need is the inverse — converting the GPS output back into local world coordinates (x, y) to compare it with the model output.

You mentioned that the sensor can also provide local x and y values relative to a reference point (refLat, refLon), but I’m unsure:

- Where can I define or retrieve this reference point (refLat / refLon)? Should it be set in the scenario definition or configured manually?

- Is there a way to access the local x and y coordinates directly from the GPS sensor output, or must I always compute them manually using a flat-earth approximation?

Any clarification or suggestions would be very helpful.

Thanks in advance!

Hi, to get the xyz coordinates of the vehicle in the world in ROS2, you can use the /state sensor: StateSensor — BeamNG-ROS2 documentation
